What Raycast's rewrite can teach product teams about architecture in the age of agents

Raycast published a technical deep dive last week about rebuilding their desktop app from scratch. The title was understated. The implications were not.

On the surface, it is a story about going cross-platform. A macOS-only Swift app, rewritten as a four-layer hybrid stack so it can run on Windows too. The kind of engineering blog post that normally gets shared by a few hundred infrastructure nerds and then fades.

But read it again and something sharper emerges. The team did not just rebuild for a new platform. They rebuilt for a world where software is operated by something other than human hands. And the architecture they chose says something about what that world expects from the applications that live in it.

The core idea is simple enough to fit in one sentence: UI and AI agents should be equal callers of your application's capabilities. The user clicking a button and the agent sending a request should travel through the same path. One consumer, two consumers, it makes no difference at the architecture level.

This sounds obvious. Almost nobody builds this way.

The old architecture ran out of room

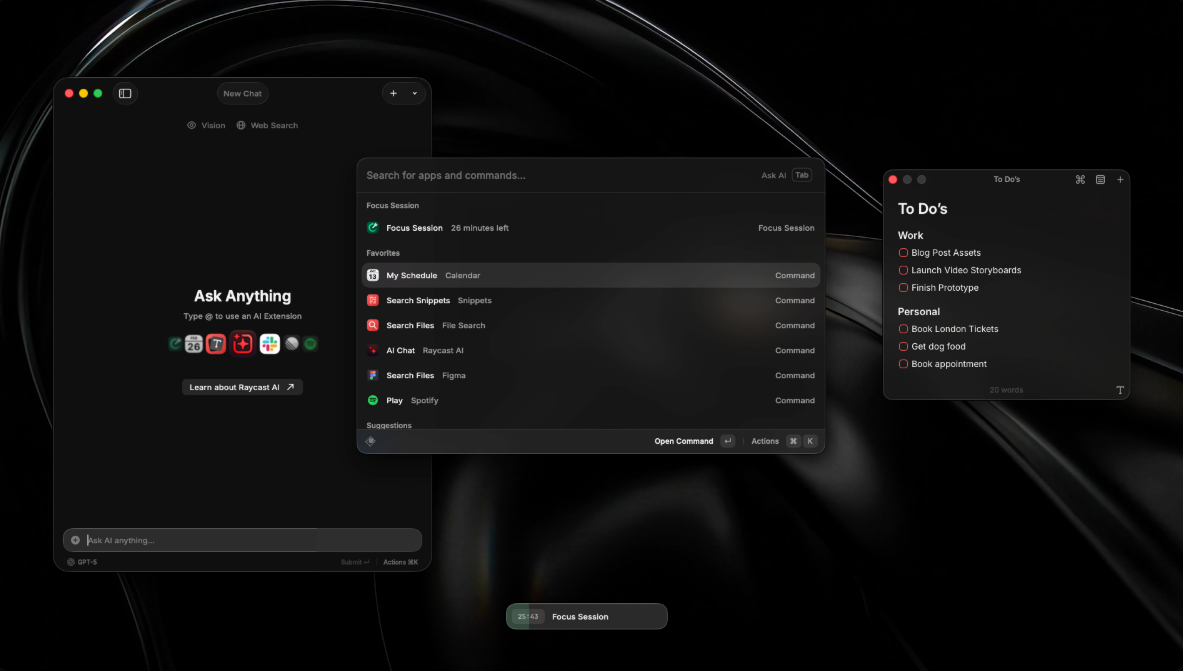

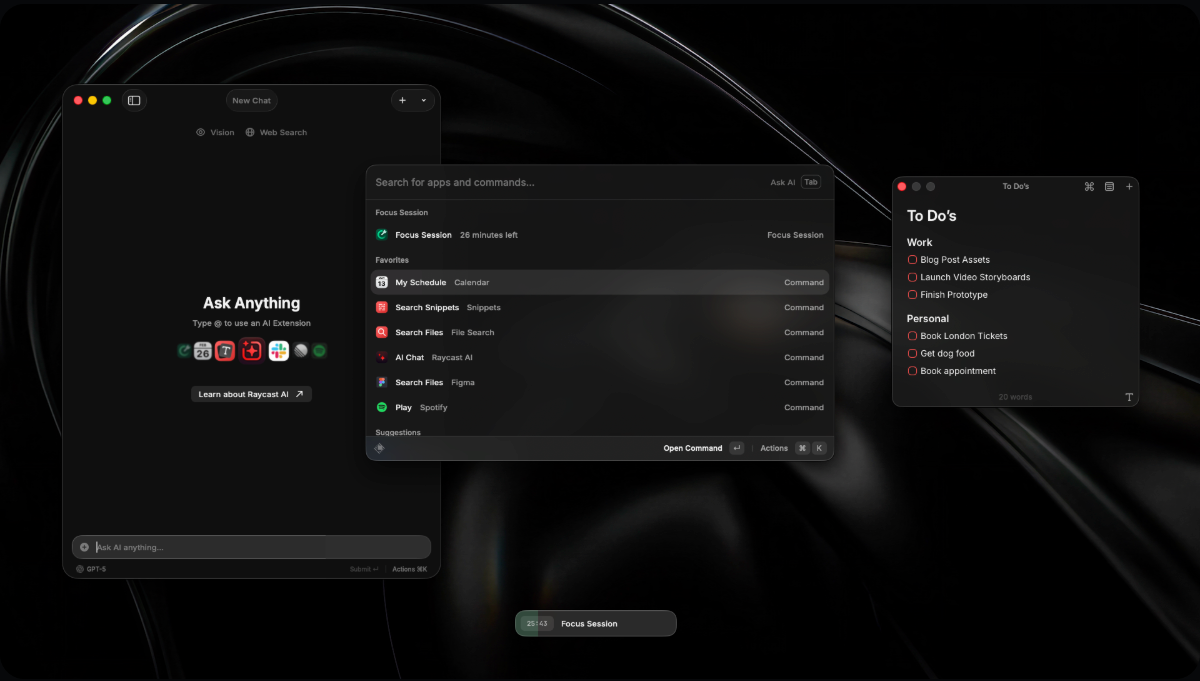

Raycast v1 was a clean piece of work. Swift. AppKit. Tight integration with macOS. It launched in 2020 as a launcher replacement and did that job well. Users liked it. Performance was good. Memory stayed around 200 to 300 MB.

The problem was not that v1 broke. The problem was that the product outgrew the architecture.

A launcher became a platform. AI Chat showed up. Then Notes. Then an Extension Store with hundreds of community extensions. Cloud Sync. File Search. Each feature got bolted onto a skeleton designed for a much narrower job. Compile times crept upward. Changing one feature meant touching modules that had no business knowing about each other. The team had trouble hiring engineers with deep AppKit expertise.

But the real ceiling was more fundamental than any of that. In v1, business logic lived inside the UI layer. You wanted to trigger a feature? The path went through the view. You wanted a script to interact with your data? Good luck. Every capability the application possessed was buried inside a ViewController somewhere, reachable only by a human hand guiding a cursor.

That was fine in 2020. In 2026, it is a liability.

The team understood this. They did not try to paper over it with a plug-in or a bolt-on API layer. They tore the whole thing down and started over.

Four layers, one rule

The new architecture splits Raycast into four layers. Each one talks to the others through typed IPC, with compile-time guarantees across all four runtimes.

The Host App handles windows, hotkeys, the menu bar, the tray icon, and loading the WebView. On macOS that means Swift and AppKit. On Windows, C# with .NET 8 and WPF. Nothing resembling business logic lives here.

The Web Frontend is React and TypeScript, shared across both platforms. Every window gets its own entry point. This layer renders pixels and handles input. That is it.

The Node Backend runs as a single long-lived process. All business logic. The database. The extension runtime. Every service the application provides. This is where the actual work happens.

The Rust Core handles data, the cloud sync schema, and a custom file indexer built from scratch.

This arrangement has a specific consequence: business logic no longer lives inside the UI. It lives in a separate process that exposes its capabilities through a typed interface. The UI calls that interface. Extensions call that interface. And there is nothing stopping an external process from calling it too.

The user clicking "search files" and an AI agent issuing a search command travel through the same IPC channel. The Node process does not know which one initiated the request. It does not need to know.

This is the architecture you build when you stop thinking of your application as something a person operates and start thinking of it as a set of capabilities that can be composed and called by whoever needs them.

Most AI features are glued on

The standard playbook for adding AI to an existing application goes like this: add a chat dialog to the UI, wire it to a large language model, and hard-code a handful of intent mappings. The user says "find my files," the intent router maps it to the file search module. The user says "create a reminder," the intent router maps it to reminders.

It works for demos. It breaks at scale.

Every new AI capability means touching the dialog layer's code. The intent mapping table grows. Edge cases multiply. Error handling, already tricky when the model's output is nondeterministic, scatters across modules. And the things users actually want tend to span multiple capabilities. "Go through my meeting notes from last week, pull out the action items, and add them to my calendar." That kind of compound request is a nightmare to route through hard-coded intent maps.

The bottleneck is not the model. The bottleneck is that your application never gave the model a clean interface to work with.

Raycast's extension system points toward a different approach. Extensions run inside the Node process. They have typed input schemas and typed outputs. They do not depend on the UI being visible, or even loaded. If you already have a system of discoverable, typed, composable commands, adding a tool for an AI agent is not a product initiative. It is just registering one more entry in a list the system already maintains.

The agent does not need to understand your UI layout. It does not need to simulate clicks or wait for animations to finish or parse text out of labels. It sends a correctly typed IPC message. The same one the UI would send if a human had clicked the button.

Equal callers. That is the whole thing.

The file indexer is not for humans

The Rust indexer gets a lot of space in the blog post. It deserves it. On Windows, the team reads the NTFS Master File Table directly, scanning entire drives in seconds rather than minutes. It is among the more hardcore pieces of systems programming you will see in a product blog this year.

But the implementation, impressive as it is, is not the interesting part. The philosophy behind it is.

Traditional desktop search systems were designed for a human at a keyboard. Type a filename, get a result. That works if the consumer is a person who knows what they are looking for. It falls apart if the consumer is an agent that needs to answer a question like "which PDFs modified this week contain contract language about liability caps."

That kind of query needs structure. It needs metadata. It needs cross-application context. The file is a PDF, it lives in a project folder, it was attached to an email from legal, and the email thread mentions a deadline. None of these connections exist in a traditional file index.

Raycast's indexer allows any extension to register its own data schema. Contacts, notes, calendar events, clipboard history, code snippets. Different structures, different sources, one unified index. One query engine.

A human experiences this as faster search. An agent experiences it as structured memory it can actually reason over.

We spend enormous energy debating RAG architectures, vector databases, and context window management. Far less energy goes into a prior question: what would your application's data layer look like if you designed it from the start for a non-human intelligence to query efficiently? The indexer is not a complete answer to that question. But it is asking it, which puts it ahead of most.

What 150 megabytes actually buys you

The blog post is unusually honest about the trade-offs. v2 uses 350 to 450 MB of memory. v1 used 200 to 300. That is a real increase, and the team says so directly. No apology. No deflection. "We're actively working on optimizing this."

A conventional performance review would flag this as a regression. Users will not celebrate an extra 150 MB of RAM usage. They might not even notice it on modern machines. But it is not nothing.

The mistake would be treating memory as the only currency on the balance sheet.

What does that 150 MB pay for? A cross-process typed IPC channel that works across four different runtimes. A long-lived Node process running the extension runtime and all business logic. A Rust data layer with a custom file indexer that reads raw filesystem tables. And the property that matters most: every capability the application has can be called by something other than a human clicking a button.

The standard performance scorecard has no column for "programmability." It has no row for "agent-operability." Not because these things do not matter. Because almost no desktop application has ever bothered to treat them as first-class concerns. The metrics we track reflect the architecture we assume, and the architecture we assume is one where a human is the only consumer.

Raycast's bet is that this assumption has a shelf life. When AI agents begin to saturate the desktop operating system, applications that cannot be called programmatically will be blind spots. The ones that can will be infrastructure.

The 150 MB is not a performance regression. It is a down payment on not having to rebuild again in three years.

The hard part is knowing when

The most instructive part of this story has nothing to do with technology. It is about timing.

Raycast was not forced into this rewrite. The product was growing. Users were happy. Metrics were healthy. By every conventional measure, now was the worst possible moment to tear down the foundation and start over.

They did it anyway. Not because the old architecture was broken. Because its limits were already visible.

Most teams wait until technical debt is actively hurting delivery velocity. By then the window is narrow. You do not get to build the right architecture. You get to build the one that fits inside the quarter. Compromises stack on compromises. You ship something that looks like the old thing but with a new coat of paint, and you tell yourself you will do the real work next time.

Next time never comes.

The smarter read on Raycast's decision is this: AI agents are not a feature request. They are an architecture requirement. If you treat them as a feature, you add a chat box and call it done. If you treat them as an architecture requirement, you ask whether every capability in your application is exposed as a discoverable, composable, typed command.

The first approach is faster today. The second one survives tomorrow.

The window for making this choice is not infinite. Agent penetration of desktop operating systems is happening whether your application is ready or not. If you wait until users start asking why ChatGPT cannot operate your app, the only answer you will be able to give is a fragile glue layer. Accessibility APIs. Screen recognition. Coordinate-based clicking. Hacks on top of hacks to operate an interface that was never designed to be operated by anything but a person.

Those are not solutions. They are what you do when you ran out of time.

What to take from this

Three things stick after reading the Raycast post.

The first is that the team did not graft AI onto the old architecture. They rebuilt the architecture to be legible to AI from the ground up. Extension runtime, typed IPC, decoupled backend. Each piece makes the system more callable by something other than a UI. Taken together, they form a coherent answer to a question most product teams have not seriously asked yet.

The second is that they did not hide the cost. They stated it plainly and moved on. That kind of candor is rare in technical writing. Most blog posts either ignore the trade-offs or wrap them in enough qualifiers that the reader cannot tell what the actual numbers are.

The third is that the logic is internally consistent. If you want the extension ecosystem to keep growing, and you want AI agents to treat your application as a first-class tool, then the backend has to be decoupled from the UI, the data layer has to be machine-friendly, the IPC has to be typed, and the extension runtime has to be discoverable. These pieces lock together. Remove any one and the whole thing wobbles.

For teams building products in the age of agents, this post is not a template. Nothing is settled enough to serve as a template. But it is a reference point for three questions that matter more than your choice of framework or model provider: How do you tell whether a technology shift demands a feature response or an architecture response? What are you willing to pay for programmability? And when are you going to start?

May 2026